The question universities keep asking is how to stop students using AI to cheat. It’s a reasonable question. It’s also probably the wrong one.

When a student in Johannesburg or Nairobi submits an AI-generated essay, the instinct is to treat it as an ethics problem — a shortcut taken, a rule broken. What’s harder to sit with is the possibility that the student made a perfectly rational calculation. That the assignment, as designed, invited exactly this response. That the curriculum, long before AI arrived, had already communicated something important: your presence here is not particularly necessary.

Aslam Fataar, writing recently about pedagogy and AI in South African higher education, comes closer to this than most. His argument, that universities need pedagogical imagination rather than surveillance and compliance machinery, is worth taking seriously. The machinery is familiar: plagiarism detection software, AI disclosure policies, redesigned rubrics. Each one a patch. Each one leaving the underlying structure untouched.

The philosopher Slavoj Žižek has a name for this tendency, borrowed from astronomy. When Ptolemy’s Earth-centred model of the cosmos kept producing wrong predictions, the response wasn’t to question the model. It was to add epicycles — mathematical workarounds that preserved the framework while absorbing the anomaly. Universities have been adding educational epicycles for years. AI has simply made the process more visible.

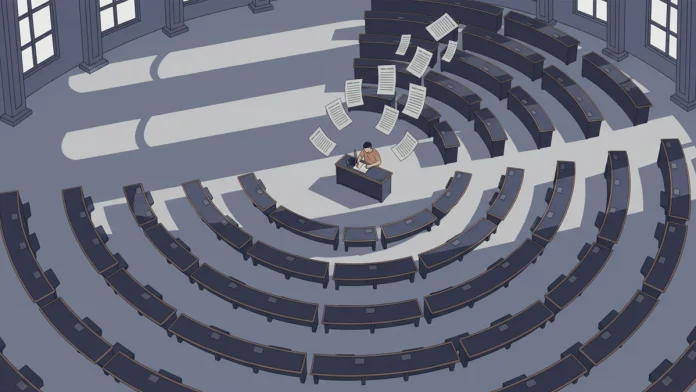

What the framework actually produces, if you follow the language universities use about themselves, is striking. Graduate attributes. Throughput rates. Time-to-degree. Students tracked as inventory moving through stages. The dynamic, genuinely unpredictable process of a person developing intellectually, reduced to a checklist of quantifiable outputs. When the system is built this way, AI doesn’t subvert it. It fits perfectly.

The more interesting argument — and the one Fataar gestures toward without quite arriving at — is that assessment has rarely measured authentic learning anyway. It has measured the performance of learning. An ability to produce, on demand, the correct shape of understanding. AI didn’t break that model. It just made the performance available to everyone, cheaply, overnight.

What genuine transformation might look like is harder to describe than to sense. Some educators talk about curriculum as a process of self-authentication — not romantic, not soft, but genuinely demanding in ways that can’t be outsourced. Can you see this student’s presence in the work? Can you see where they’ve struggled, where their thinking shifted, where they’ve staked something? AI-generated work becomes identifiable not because software flags it but because nobody is becoming themselves through it. No journey is being made.

There’s a larger problem behind all of this, and it gets less attention. If credible projections that artificial general intelligence (AGI) will arrive within the next few years are even roughly right, the employability conversation collapses entirely. AGI would outperform graduates at most of what universities currently train people to do. Preparing students to compete in that race starts to look less like education and more like a very expensive misdirection.

Hannah Arendt wrote in 1958 about the prospect of a society of labourers without labour. She was thinking about automation. The question her warning raises for universities now — what education is actually for when economic necessity is removed from the equation — is one the current framework has no answer to.

Whether universities can develop one before their current framework becomes irrelevant is worth watching.