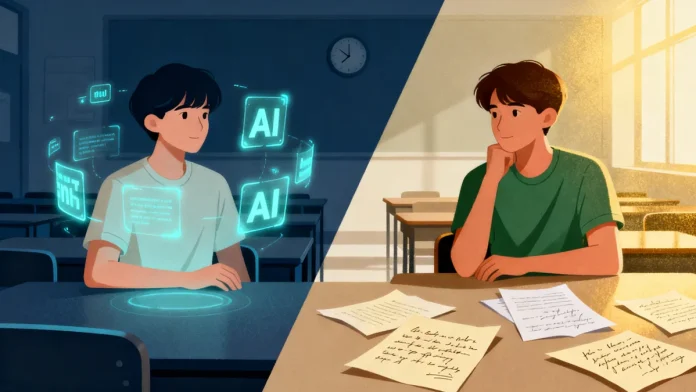

On a Tuesday afternoon in Boston, a professor reads two essays that could have come from the same careful mind. Clean structure. Confident tone. Neatly woven arguments. Later, one student admits to leaning heavily on AI. The other swears every sentence was hard-won. On the page, there’s no obvious difference.

Scenes like this are becoming ordinary. Not dramatic. Just quietly unsettling.

For centuries, universities worked on a simple assumption: learning changes the learner. You enter unsure, you wrestle with ideas, you leave altered in ways that are difficult to fake. The process was slow. Apprenticeship, revision, long conversations in offices with books stacked against the walls. Even when technology reshaped access to knowledge, from the printing press to the personal computer, the core rhythm held. Effort over time produced growth.

Generative AI unsettles that rhythm. It doesn’t just speed things up. It rearranges the sequence. Drafts appear instantly. Literature reviews take minutes. Arguments arrive polished, often better structured than what a tired student might manage at 2 a.m. The visible struggle that once signaled intellectual effort becomes optional.

In the United States, this tension is impossible to ignore. Elite universities partner with tech firms, compete for AI research funding, and encourage students to master the tools shaping the economy. Walk across a campus in California or Massachusetts and you’ll hear talk of startups, model optimization, national competitiveness. AI isn’t an experiment. It’s infrastructure.

At the same time, faculty quietly redesign syllabi. More in-class writing. More oral exams. Fewer take-home essays. Not out of nostalgia, but because they need to see thinking happen in real time. One dean recently described the mood as “curious but wary.” Nobody wants to fall behind. Nobody wants to hollow out the degree either.

In South Korea, the pressure feels sharper. AI has been woven into national growth strategy. Universities race to launch new majors, industry partnerships, research centers. Students know exactly what’s at stake in a competitive job market. Speed matters. Output matters. Rankings matter.

In classrooms shaped by an already intense exam culture, AI becomes a natural accelerator. Assignments get done faster. Presentations look sharper. But some professors describe a subtle change in discussion. Students arrive with tidy summaries generated beforehand. The conversation starts at synthesis and skips the confusion. Without confusion, there’s less friction. Without friction, it’s harder to see growth.

This is not a moral panic about students using tools. Most educators admit AI can reduce routine labor and widen access to structured thinking. The deeper unease is about time. Organic learning has always relied on it: the stalled paragraph, the failed draft, the uncomfortable silence before insight forms. Time isn’t decorative. It does the shaping.

Synthetic systems compress that shaping. They close the gap between question and answer. In a culture already obsessed with efficiency, the slower work of reflection begins to look indulgent.

So universities face a choice that feels less technical than philosophical. Are they certifying output, or are they certifying judgment? If the former, machines are getting very good at the job. If the latter, then the messy, inefficient stretch of development still matters.

The stakes aren’t only academic. Universities feed into economies, governments, public debate. If they begin to reorganize themselves around productivity alone, they may produce graduates who are fluent but less practiced at working through uncertainty.

Perhaps the most radical move now is not banning tools or embracing them wholesale. It may be insisting that some parts of thinking cannot be rushed. In an age defined by speed, defending time sounds almost quaint. Yet the kind of mind that forms in protected slowness may be precisely what an accelerated world quietly needs.